Research

Your AI doesn't have a model problem. It has a context window problem.

Introducing Reasonara, a new memory layer for agentic AI. With an infinitely scalable token context window — verified at 125M tokens — Reasonara addresses the root cause of enterprise AI failure: not the model, but the memory infrastructure behind it.

Repeating the wrong conversation

Most of the conversation around enterprise AI is about which models to use: ChatGPT, Claude, and/or Gemini? Should they go open-source or closed-source? Bigger context window or smaller? It's the wrong conversation.

Models are the easy part of an AI deployment. Every company has access to roughly the same ones. The hard part is ensuring your chosen models can remember the details of your business.

The technical term for "what the AI knows about your business" is "memory and context." Memory is where most enterprise AI projects quietly fall apart. Not because the model is dumb. Because it has nothing to be smart with.

We've been working on this problem. If you want to understand what we mean by a memory problem, look at where enterprise AI actually breaks.

Where Enterprise AI Actually Breaks

A widely cited MIT NANDA study estimated the failure rate of enterprise AI pilots at around 95%. With this adoption problem, we want to know why. The answer, in most cases, isn't that the model couldn't reason. It's that the model couldn't find the context.

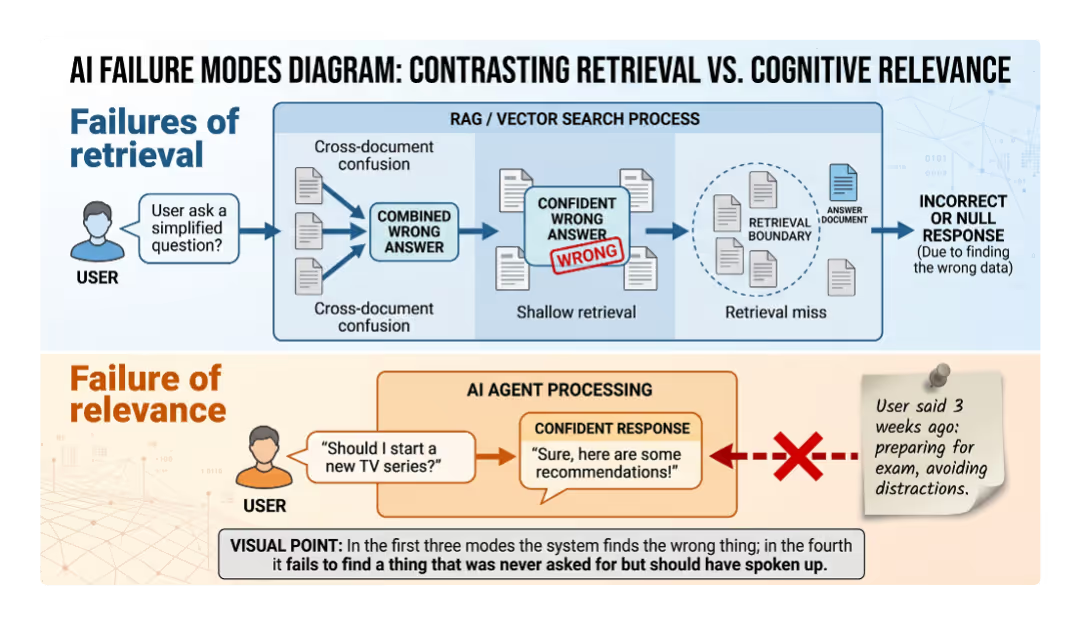

We have a name for this: context rot. It shows up in three recurring failure modes inside real enterprise deployments.

- Cross-document confusion. The AI blends information from multiple sources that look related but aren't. Ask a question like "What programming language does the billing service use?" and the system pulls documentation from three different microservices. It gives you an averaged-out answer that's true of none of them. The retrieval looks confident. Worse, you would never catch it.

- Shallow retrieval that leads to hallucination. The AI finds passages that sound relevant to the question, generates a fluent, sourced-looking answer, and is confidently wrong. LlamaIndex's own benchmarks show this pattern in 15–20% of complex enterprise queries. The system doesn't know it's wrong because it doesn't know what wrong looks like.

- Complete retrieval misses. Sometimes the answer simply isn't in what the AI retrieved. The right document exists somewhere in the enterprise. The retrieval just didn't find it. The system doesn't know what it doesn't know; neither does the user.

All three failures share a root cause. Today's AI can't reliably navigate enterprise knowledge because it doesn't have the right kind of supporting memory structure. They have storage and search. But they don't have memory in any meaningful sense.

Harvard Business Review made the larger point cleanly last year: when every company can use the same AI models, context becomes the competitive advantage. Anthropic's March 2026 labor-market report sharpened it further. Large language models (LLMs) can theoretically automate ~57% of tasks in computer and math roles, but <2% of actual work has been affected. The gap between 57% potential and 2% reality is mostly a memory infrastructure problem.

Gartner reached a similar conclusion in February 2026, naming "context graphs," structured memory layers that sit between raw data and AI models, as essential infrastructure for agentic systems.

The Fourth Failure Mode: Memory That Should Have Spoken Up

The failures above all involve a question being asked and an incorrect answer being returned. There's a fourth failure mode that's harder to see, more damaging in practice, and almost entirely unaddressed by current memory systems.

Consider this simple case. A user told an AI assistant several weeks ago that they're preparing for an important certification exam and want to avoid distractions until it's over. Many unrelated conversations later, they ask the assistant: "Should I start watching the new season of that show everyone's talking about?"

Every existing memory system will give a reasonable answer to that question and miss the point entirely. The earlier message about the exam is the answer. But it's not factually similar to a question about a TV show. There's no keyword overlap. There's no obvious topical match. The memory exists. The retrieval doesn't surface it.

We call this the cognitive memory problem. The relevant memory isn't a fact to be looked up. It's a latent constraint that should change how the system responds, such as a goal, a state, a previous decision, a regulatory restriction, or a preference. Something the user told you once that should now be shaping behavior, even though they're not asking about it.

The enterprise version of this is more expensive than the consumer version. An AI agent recommends featuring Project Titan in a customer case study, but doesn't surface the NDA prohibiting external mention. An agent suggests a vendor and doesn't surface the procurement freeze announced two quarters ago. An agent drafts a regulatory filing without surfacing the legal team's prior position on the same question. In each case, the relevant memory exists in the company's data. The AI just had no way to know it was relevant to this question right now.

This is the failure mode that quietly causes the most damage in production. It doesn't look like a wrong answer. It looks like a confident, plausible answer that happens to violate a constraint nobody remembered to encode in the prompt.

Most memory systems can't solve this because they treat memory as a retrieval process. You ask, they search, and they return the most similar text. If the relevant memory doesn't look like the question, it doesn't surface. The whole pipeline is built around surface similarity.

We think solving this requires treating memory not as a search index but as a system that anticipates how each piece of information might become relevant in the future. That's a different architectural problem, and it's the one that drove most of the design decisions in Reasonara.

This is the gap we set out to close. We call this Reasonara.

Introducing Reasonara

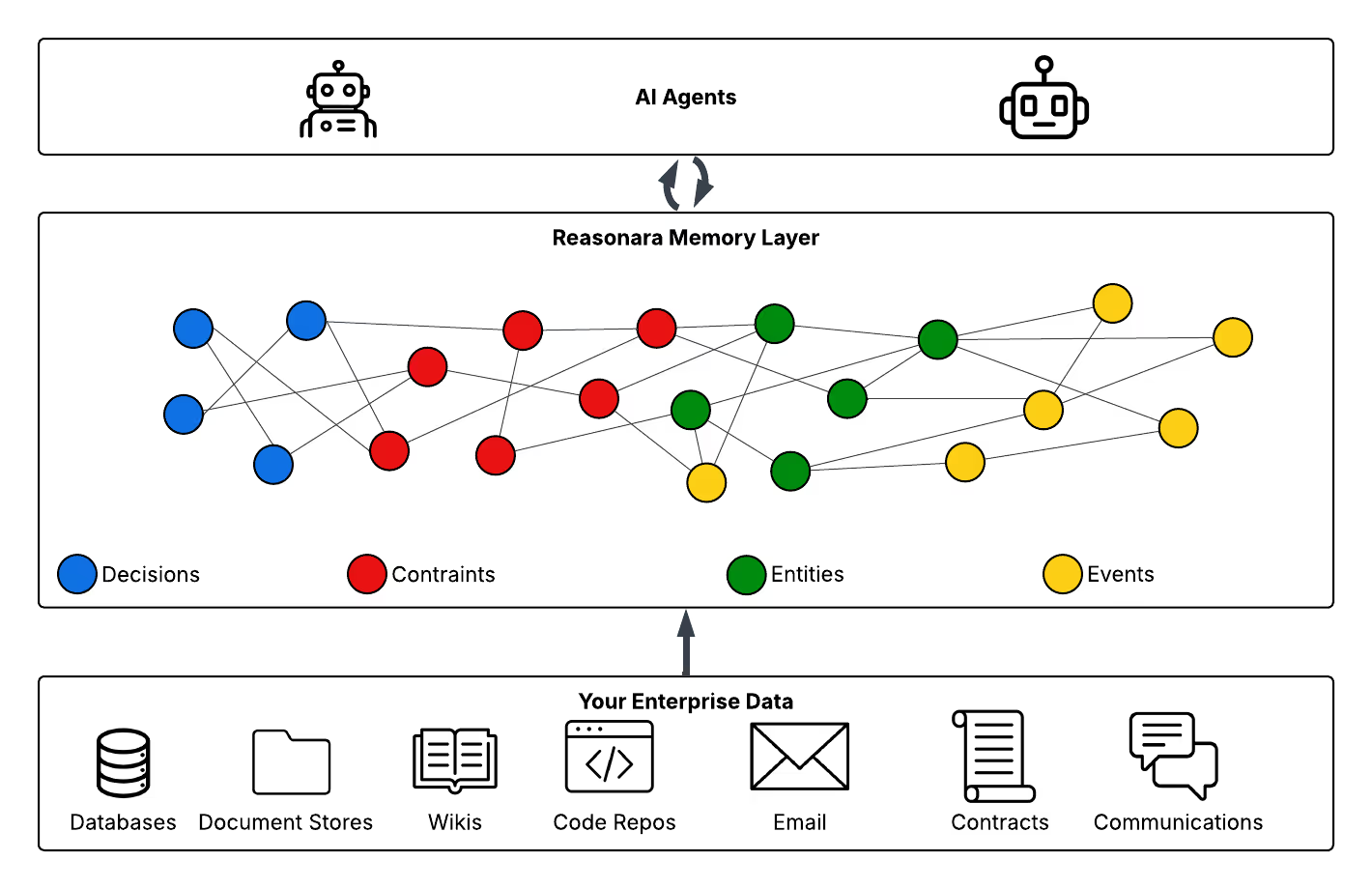

Reasonara is a memory layer for agentic AI. It sits between your company's data and the AI models acting on it. It does one thing: builds and maintains a structured map of how everything in your business is causally connected, such as decisions, documents, workflows, outcomes, and surfaces the right slice of that map whenever an AI agent needs to act.

Reasonara's name stems from Physics. In a standing wave, frequencies that reinforce each other create stable, persistent patterns. Frequencies that interfere with each other cancel out. Reasonara's memory works similarly: causal patterns that recur across your data, such as the same constraint, the same decision, the same dependency, reinforce one another and stay loud. Patterns that don't recur fade. The result is memory that reflects what actually matters in your business, not just what was written down most recently.

Reasonara is:

Automatic. No manual tagging, no schema design, no data migration. Reasonara reads what you already have and organizes it.

Structured. Not a pile of text snippets behind a search box, but a navigable graph of entities, relationships, decisions, and constraints. The structure is the product.

Agentic-native. Built for AI agents that need to reason across many documents and many time periods to take an action. Not for one-shot question answering.

To explain how this works in practice, here's what's inside Reasonara.

How it works

Reasonara has four layers: data at the bottom, organization in the middle, and queries at the top.

Connect to data where it lives. Reasonara connects to your existing enterprise sources such as databases, document stores, wikis, code repos, e-mail, contracts, and Slack without data migration. It deploys inside your own cloud environment, so your data never leaves your security boundary.

Extract the patterns that already exist. Reasonara reads across that data and pulls out the latent structure: who decides what, what depends on what, what constraints apply where. Every company already has this structure. It's just scattered and invisible. This layer makes it visible.

Build a structured memory. The patterns get organized into a typed graph. Each node is a unit of meaning: a decision, a constraint, an event, a goal. Each carries both a short concept label (how the memory gets found) and the full underlying detail (what's used to answer). When new information comes in, it merges into an existing concept or creates a new one. Memory consolidates rather than fragments: a hundred raw mentions of "Alice's career" become one coherent entry. The graph also captures how nodes relate — what causes what, what follows what, what contradicts what. This makes the memory navigable rather than just searchable.

Retrieve with policy, not just similarity. When an agent asks Reasonara a question, Reasonara runs a learned policy that decides whether to refine the question, expand into related concepts, or stop and answer. Simple lookup, stop early. Hard multi-hop question, expand. The agent doesn't search the memory — it asks the memory, and the memory decides how hard to look.

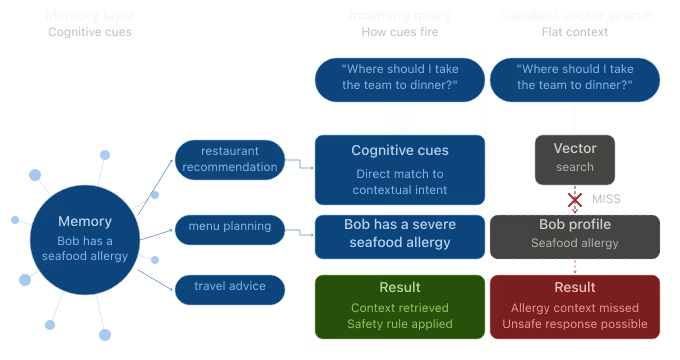

One feature is worth pulling out, because it's how Reasonara addresses the fourth failure mode from earlier — memory that should speak up but doesn't. Each memory carries a small set of cognitive cues: short phrases describing future situations in which this memory should resurface. A memory about a user's seafood allergy might carry the cue "restaurant recommendation." Later, when an agent is asked to recommend a restaurant, that allergy memory surfaces. Even though "seafood allergy" and "restaurant recommendation" share almost no surface similarity. The cues are written when the memory is stored, not at query time. The memory was already prepared for the question.

This is also what makes the enterprise constraint cases — the NDA, the procurement freeze, the prior legal position — surface when they matter. Cognitive cues turn those from things buried in your data into things the memory layer actively raises.

That's the architecture. Here's how it performs.

Results

Memory and retrieval is a fast-moving field, with serious work coming out of frontier labs, open-source projects, and academia every month. Here's where Reasonara lands today, on the benchmarks the community uses to evaluate this work.

Long-conversation memory (LoCoMo). LoCoMo tests whether a system can remember and reason over long, multi-session conversations — single-fact recall, multi-step reasoning, time-sensitive questions, and open-ended ones. Reasonara scores 93.3% on the LLM-judge metric, ahead of the strongest published alternatives.

The headline number matters less than where the gap is biggest. On multi-step reasoning questions — the ones that require connecting evidence from different parts of a conversation — Reasonara reaches 0.967, against 0.787 for the next-best system. On open-ended questions, where the right answer depends on the broader context rather than a specific fact, Reasonara reaches 0.781, compared to 0.594. Those are the question types that most closely resemble real enterprise work.

Cognitive memory (LoCoMo-Plus). LoCoMo-Plus is the benchmark designed to test whether a system can apply latent constraints from earlier in a conversation to a later question that doesn't explicitly invoke them. The exam-prep example we opened that section with is exactly this kind of test.

The whole field, including frontier models on their own and existing memory systems, sits between roughly 15% and 26% on this benchmark. The strongest non-Reasonara result is Gemini 2.5 Pro at 26.06%. Reasonara reaches 72.82%, a gain of about 47 percentage points over the best baseline. We were genuinely surprised by the size of the gap when we first ran the evaluation. The fact that the gap is this large suggests cognitive memory isn't just a harder version of retrieval. It's a different problem. The architecture you choose actually matters more than the model you put behind it.

What this means for cost. Reasonara uses fewer tokens per query than full-context approaches dramatically, because the memory layer surfaces a small, precise slice of context instead of stuffing everything into the model's window. For enterprises running AI at scale, that's a direct cost reduction.

What this means for capacity. Reasonara handles enterprise-scale working memory today, with a clear engineering path to larger scale. This is the working knowledge of an entire enterprise, not a single project.

A note on the benchmarks themselves: full-context inference is just stuffing everything into a long-context model and scored 82.5% on LoCoMo, which is closer to Reasonara than most marketing materials would admit. Full context is a real baseline. The reason it's not the answer is cost and latency at scale, not raw accuracy on small corpora.

Numbers explain what Reasonara does. Here's why we think this is the moment to build it.

Why now

Three things have changed in the last eighteen months.

Models are commoditizing. Every enterprise has access to roughly the same frontier models, and the gap between the best and second-best closes every quarter. The advantage no longer comes from picking the best model. It comes from giving any model the best context.

Context windows aren't enough. Even a million-token window can't hold a real enterprise knowledge base, and stuffing everything in is prohibitively expensive at scale. More importantly, accuracy degrades as context grows and models lose track of what's in the middle of long inputs. Bigger windows are not the same as better memory.

Simple retrieval has hit a ceiling. Vector search works for small, contained use cases. It breaks down when AI agents need to reason across multiple documents and multiple time periods, which is exactly what agentic systems do. The next layer of capability is structural, not statistical.

Gartner predicts that by 2028, more than half of enterprise AI agent systems will rely on context graphs. We agree. Every enterprise deploying AI agents at scale will need this layer. The only question is whether they build it themselves — which most can't, at the depth required — or buy it.

The AI models work. The data is already there. What's missing is the memory infrastructure between them. That's what Reasonara is.