Why AI Makes You 20x Slower

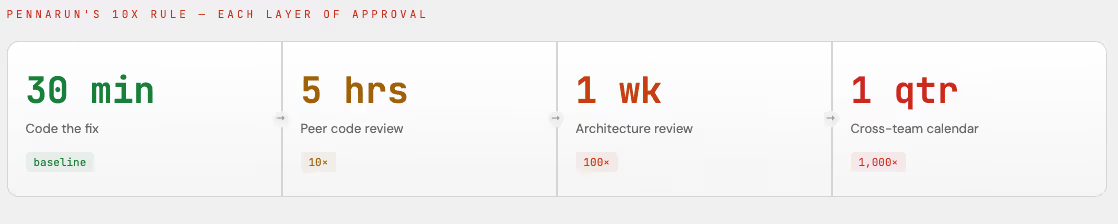

"Every layer of approval makes a process 10x slower. Not the effort, wall clock waiting."

That's Avery Pennarun, the CEO of Tailscale and the mind behind some of the best thinking on how to design engineering organizations. His rule sounds like an exaggeration until you really think about it.

Fixing a bug takes 30 minutes. Peer review of your code takes half a day. Getting feedback on a design doc from the architects takes a week. A dependency review with impacted teams takes a quarter to get scheduled.
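The rule can be checked with a little arithmetic. The sketch below is an illustration, not Pennarun's own calculation: it starts from the 30-minute bug fix and multiplies the wall-clock time by 10 for each approval layer. The layer names come from the paragraph above; the 40-hour working week used for the conversion is an assumption.

```python
BASE_HOURS = 0.5          # the 30-minute bug fix
SLOWDOWN_PER_LAYER = 10   # the factor from the quote
WORK_WEEK_HOURS = 40      # assumption, used only to convert hours to weeks

LAYERS = ["no approval", "peer review", "design review", "dependency review"]


def wall_clock_hours(layers: int) -> float:
    """Working hours elapsed when a 30-minute task passes through `layers` approvals."""
    return BASE_HOURS * SLOWDOWN_PER_LAYER ** layers


for count, name in enumerate(LAYERS):
    hours = wall_clock_hours(count)
    weeks = hours / WORK_WEEK_HOURS
    print(f"{name:18s} {hours:6.1f} h  (about {weeks:.2f} working weeks)")
```

Under these assumptions the three layers come out to 5, 50, and 500 working hours, which is roughly half a day, a week, and a quarter.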

AI doesn't fix these wait times. Claude writes the code in 3 minutes instead of 30, which is great! However, the reviewer still takes half a day, the design review still takes a week, and the cross-team dependency review still takes a quarter.

AI speeds up one step, but the pipeline does not care.
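To put rough numbers on that, here is a minimal sketch of the end-to-end speedup when only the coding step gets faster. The coding times come from the text; the wall-clock conversions for the waits (half a day = 12 hours, a week = 168 hours, a quarter = 13 weeks) are assumptions chosen for illustration.

```python
CODING_BEFORE_H = 0.5   # 30 minutes by hand
CODING_AFTER_H = 0.05   # 3 minutes with AI

# Wait times in wall-clock hours (assumed conversions, see above).
WAITS_H = [
    ("peer review", 12.0),
    ("design review", 168.0),
    ("dependency review", 2184.0),
]


def speedup(before_h, after_h, waits_h):
    """End-to-end speedup when coding time drops but the waits stay the same."""
    total_wait = sum(waits_h)
    return (before_h + total_wait) / (after_h + total_wait)


print(f"coding only: {speedup(CODING_BEFORE_H, CODING_AFTER_H, []):.2f}x")

included = []
for name, hours in WAITS_H:
    included.append(hours)
    gain = speedup(CODING_BEFORE_H, CODING_AFTER_H, included)
    print(f"through {name}: {gain:.4f}x")
```

Coding alone gets 10x faster, but once the half-day review is included the end-to-end gain falls below 4 percent, and it shrinks further with each slower stage.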

The Toyota Lesson

Toyota figured this out decades ago. They got better by building quality engineering into the production process itself, which made separate QA layers redundant. W. Edwards Deming showed them that every inspection layer slows down the overall process while degrading quality. The pipeline shifts to a "pass the blame" mentality. The production team stops checking their work because "that's what QA is for". The first QA team relaxes because the second QA team will catch what they miss. Accountability dissolves across layers because each team along the way just lets the problems move down the pipeline.

When US auto manufacturers tried to copy this philosophy by installing "stop the line" buttons, nobody pushed them. Why? The culture wasn't there and, most importantly, the trust wasn't there.

The same pattern is showing up in software.

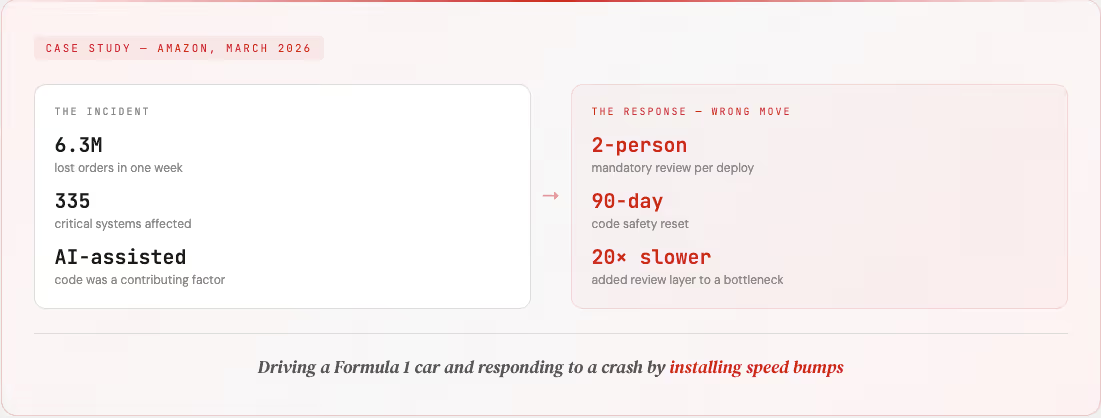

Amazon just announced a 90-day "code safety reset" across 335 critical systems after AI-assisted code contributed to outages that wiped out millions of orders in a single week.

The response? A mandatory two-person review for every deployment, formal documentation gates, and more approval layers. By Pennarun's math, Amazon now works 20x slower. They took an already bottlenecked pipeline and doubled the reviews. This just compounds the problem instead of providing a fix.

Amazon's own internal docs admitted the real issue: their safety guardrails were inadequate for the speed at which AI generates code. But instead of fixing that, they slowed the pipeline down to match the old guardrails. They're driving a Formula 1 car in a parking lot.

Comprehension Debt

Addy Osmani is an Engineering Director at Google AI and the author of one of the most widely read blogs on frontend architecture. In "The Deeper Disease: Comprehension Debt", he defines comprehension debt as the growing gap between how much code exists in your system and how much of that code any human actually understands.

Unlike technical debt, comprehension debt is invisible. Velocity metrics look great. DORA metrics hold steady. Tests are green. And nobody can explain why that architectural decision was made eight months ago or what breaks if you touch the system.

This connects Pennarun's review problem to something more fundamental. Reviews were never just a quality gate. They were also a mechanism for distributing knowledge.

When a human wrote the code and another human reviewed the work, understanding spread. AI-generated code breaks that feedback loop. The volume is too high, the output looks clean, and the merge confidence signals are all there — but surface correctness isn't systemic correctness.

And here's the inversion that should worry everyone: a junior engineer can now generate code faster than a senior engineer can critically audit it.

The rate-limiting factor that kept review meaningful has been removed.

This is verification debt. A review gate that exists because you can't predict what your system will actually do in production is not quality assurance. It is an admission that you don't understand your own system well enough to trust the process. Comprehension debt and verification debt are two sides of the same coin. One is about humans losing understanding of the code. The other is about losing understanding of what that code will do to your production system.

The Real Fix

Amazon didn't have one incident; they had a trend, because the gap between code velocity and production understanding kept widening. More reviews won't close that gap. They'll just make you 20x slower while the gap keeps growing.

The solution isn't faster coding or better reviewers. The solution is building a causal understanding of your production systems that makes reviews unnecessary. That means not correlation or pattern matching, but a model in which both machine and human actually know what will happen and why.

Don't be the team that stacks review layers on top of AI slop, keeps breaking production, and wonders why nothing feels faster. Be the team that dominates the world by having machine and human work in harmony.

References

- Business Insider, "Amazon Tightens Code Controls After Outages Including One Tied to AI"

- Avery Pennarun, "Approval Layers and Engineering Velocity"

- Addy Osmani, "The Deeper Disease: Comprehension Debt"