Research

Cielara Code: Graph-Guided Navigation for Coding Agents

Cielara Code is an autonomous coding agent that gives the model a structural map of the entire codebase before any work begins. Across three benchmarks, it improves accuracy by 3.6% over leading agents, runs ~10% faster, and uses 30–40% fewer tokens.

Today, Causal Dynamics Labs is announcing Cielara Code, an autonomous coding agent built on Cielara, our software-engineering production world model, and powered by REASONARA™, our graph-structured memory system. Cielara Code's central design choice is simple. Before the agent reads a single file, it already has a structural map of the entire codebase. This changes how to search, how much information to search through, and how often the agent lands on the right code on the first try.

Most of the public conversation about coding agents focuses on the model. Bigger context windows, smarter reasoning, longer chain of thought. We took a different bet. We think the bottleneck for production coding agents is not just reasoning — it's navigation. An agent that finds the right code quickly will outperform a smarter agent that wastes time wandering, and our benchmarks prove it.

Autonomous Agents are Flying Blind

Think of "localization" as the part of bug-fixing that happens before any code is written. The agent has to figure out which files and functions are actually relevant. This is where most production agent failures occur. Internal analysis of failed agent runs shows that roughly 82% of failures are navigational rather than reasoning errors. The agent is not failing to think; the agent is failing to find.

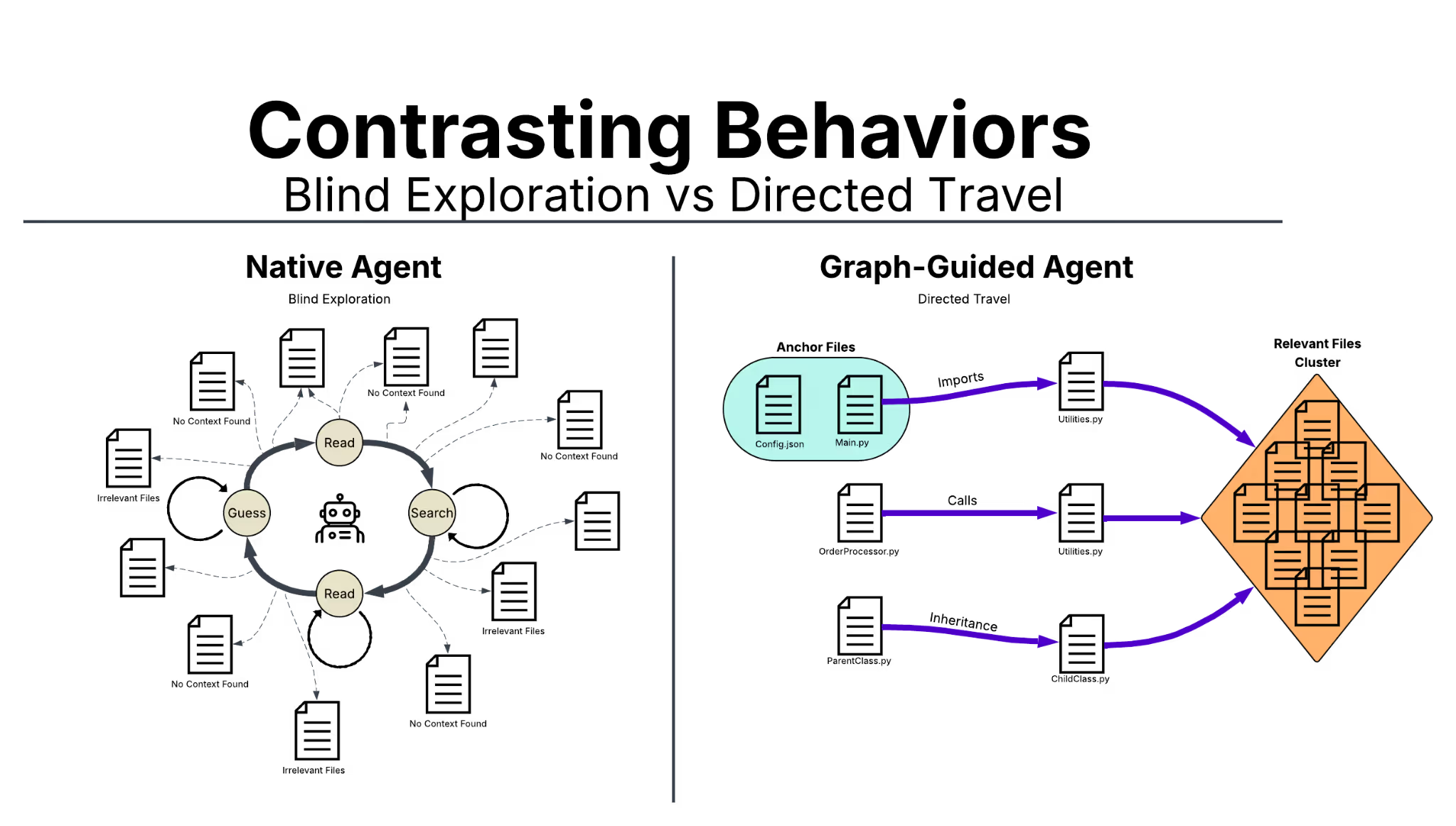

A modern coding agent's working memory is limited, and a large production codebase is far too big to fit at once. So files get read, set aside, and read again. Each round trip costs tokens (the basic unit of text that AI models charge for), and structural understanding is lost between reads. The result is what we call the "read, search, read, guess" loop that defines most coding agents on the market today.

How Cielara Code works

Cielara Code's core component is a Code Dependency Knowledge Graph — an automatically generated map of the codebase. Every file and function becomes a point on the map, and the lines connecting those points show four kinds of relationships: which files import each other, which functions call each other, which classes inherit from each other, and which files contain what. The graph is built in advance and served alongside the agent's normal search tool. We think of this as using GPS to navigate to our destination, rather than driving down every street in a city until we find it. Cielara Code opens the GPS first, narrows the search to a neighborhood, and only then drives the last block.

Concretely, when the agent runs a search, it lands on a small number of high-precision starting points on the map. From each starting point, Cielara Code surfaces the related files in a few steps: the functions that call it, the modules that import it, and the classes that build on it. The agent receives this structural evidence inside normal search results, not through a separate tool. That integration matters. Its exploration loop stays the same, so the design works across different model architectures without retraining the agent.

Underneath the graph sits Reasonara, the memory layer that makes everything work at production scale. Reasonara maintains a structured representation of the codebase within a 125 million-token context window, which is sufficient to fit entire production codebases without breaking them into pieces. When the graph surfaces a relevant file, Reasonara delivers it in roughly 100 to 400 tokens of focused, structurally organized context, rather than the 3,000 tokens a typical agent would consume reading that same file from scratch. That works out to roughly 1/5 the noise per lookup, which compounds across an issue that may require dozens of reads.

Beating the Benchmarks

We evaluated Cielara Code on three independent benchmarks. SWE-Bench tests real GitHub issue resolution. MULocBench evaluates 1,033 multi-file issues across 46 Python repositories. LocBench stress-tests both code and non-code localization. Using three benchmarks was a deliberate choice. A single benchmark is easy to over-optimize for, but consistent gains across three suggest the improvement is structural rather than dataset-specific. Cielara Code reaches 0.774 accuracy, a 3.6% improvement over Claude Code (Opus-4.6) and 6.7 points over Codex (GPT-5.2). On recall, the lead is 3.0 and 6.7 points, respectively.

MULocBench in detail

The first-attempt accuracy margin between Cielara Code and Claude Code on MULocBench (0.677 vs. 0.676) falls within statistical noise across 1,033 instances. The meaningful separation appears on the broader top-five metrics, where Cielara Code leads by 3.5 and 2.5 points, respectively. These metrics reward an agent that consistently finds the right code across multiple plausible locations, not just on the first guess. Production issues rarely live in a single file, so the broader-coverage metrics translate most directly to real-world reliability. The pattern is consistent across both metrics, which suggests a genuine structural gain in navigation rather than variance in individual predictions.

Faster and more efficient

By eliminating the "read, search, read, guess" loop, average wall-clock time per instance drops from 141.84 seconds to 128.62 seconds, roughly 10% faster. More importantly, the same code is produced using 30–40% fewer tokens because the agent no longer incurs costs for navigational dead ends. Fewer iterations means fewer model calls, which translates almost directly to lower API costs per resolved issue at any reasonable scale. For organizations running thousands of agent sessions per day, the per-session savings compound into meaningful infrastructure cost reductions without any tradeoff in output quality.

Tech Debt Divide Accelerates Into A $85 Billion Problem

As AI continues to grow into everyday life, the silent killer of many companies grows in the shadows. Every IT department fears tech debt. Stripe's Developer Coefficient report estimates that engineers spend ~42% of their workweek managing technical debt, resulting in about $85 billion in lost global productivity each year. AI created two new forms of tech debt: verification and cognitive debt. AI coding agents were supposed to reduce that burden. In practice, agents deployed without structural navigation tend to accelerate debt accumulation. The agents generate syntactically correct code that passes tests but fails to respect the architecture being modified, producing changes that ship green and revert within two weeks. Better navigation is not a nice-to-have. Better navigation is the difference between AI that saves money and AI that quietly drains budgets.

Verification Debt

When machine-generated code enters a codebase faster than humans manage to review the output, organizations accumulate verification debt — the widening gap between code deployed and code that humans actually understand. Unlike traditional technical debt, verification debt creates a false floor of security. AI-generated code is syntactically perfect, follows formatting guidelines, and passes unit tests, which triggers false confidence in reviewers who approve pull requests not because they understand the code, but because the automated checks return green.

Researchers describe three stages of accumulation. Dilution is the first stage, in which AI-generated code enters the codebase and is reviewed superficially. Fragmentation is the second, in which no single engineer comprehends the full system, and debugging requires cross-team coordination. Opacity is the third, where the codebase becomes a black box, and organizations face costly re-immersion penalties whenever something breaks.

Cognitive debt

Verification debt is the organizational symptom. The root cause is cognitive debt — a state of continuous output that feels productive but produces no learning. Six months later, when the code breaks, no one remembers the decisions or data-flow patterns that shaped the system.

An Anthropic study monitoring 52 engineers learning a new software library found that participants using AI assistance scored 17% lower on follow-up verification quizzes than the control group, with the largest drops in debugging and active code reading. A separate METR study of experienced developers quantified the perception gap precisely. Actual performance dropped 19% on complex tasks, even as developers reported feeling 24% faster — a 2.5x rework multiplier.

Human-centric ROI

Cielara Code addresses these forms of debt at the root by giving the agent a structural map of the codebase before exploration begins. Three properties of graph-guided changes reduce review burden:

- Smaller blast radius: the agent navigates to the precise files involved rather than reading dozens and guessing, so diffs touch fewer unrelated modules.

- Architecturally coherent diffs: changes respect dependency boundaries, so reviewers do not have to mentally reconstruct the module graph to understand what changed.

- Fewer rework cycles: when code survival rates increase — with patches staying in production past 30 days instead of being reverted within 14 — each review round is more likely to result in a merge.

The 30–40% reduction in iterations also means fewer patches for humans to evaluate. The true measure of an AI coding agent is not how much code it produces, but how little human time it wastes.

Commitment to Excellence

Building a production system for graph-structured code navigation that consistently improves performance across 46 diverse codebases is a significant challenge, despite the technique's conceptual simplicity. Cielara Code addresses this with four interconnected pillars:

- Structural Gains Confirmed by Benchmarks: We don't settle for a single leaderboard. The Causal Dynamics Lab's performance is validated against industry-standard benchmarks, including SWE-Bench, MULocBench, and LocBench.

- Localization as the Foundation for Agent Capabilities: Superior code localization is a critical platform wedge, serving as the necessary precursor for all subsequent downstream agent functions, including patch generation, test writing, refactoring, and code review. By mastering "where" to make changes, Cielara creates a natural expansion path to a fully autonomous development pipeline.

- Compounding Data Advantage: The system's understanding of real-world code patterns deepens and accelerates as Cielara indexes more codebases.

- More Context, Better Solutions: Cielara's performance is due to Reasonara, our memory infrastructure that offers a 125-million-token structured context window, with a roadmap to scale beyond 1 billion.

Where to How

Cielara Code establishes a new state of the art in the "where" — the roadmap turns to the "how" by generating correct, production-grade patches. We are working on critic models that learn from the results of unit tests, which indicate whether our code changes succeed or fail. By tracking rewards through the model's decisions, the system identifies which choices led to a successful update.

We are also working on dynamic runtime tracing. This technique has been shown to increase success rates from 77.4% to 83.4% for Django and SymPy by surfacing dependencies invisible to static analysis. Finally, we are extending our research into multi-codebase reasoning for microservice architectures — an area that existing benchmarks do not yet test. Current benchmarks evaluate a single codebase at a time, and as the industry continues moving toward distributed systems, new benchmarks will be required. Cielara's graph-based architecture is positioned to extend into that space as the field catches up.

We've mastered the "where." We're coming for the "how."