AI in 2026: The Rise of World Models

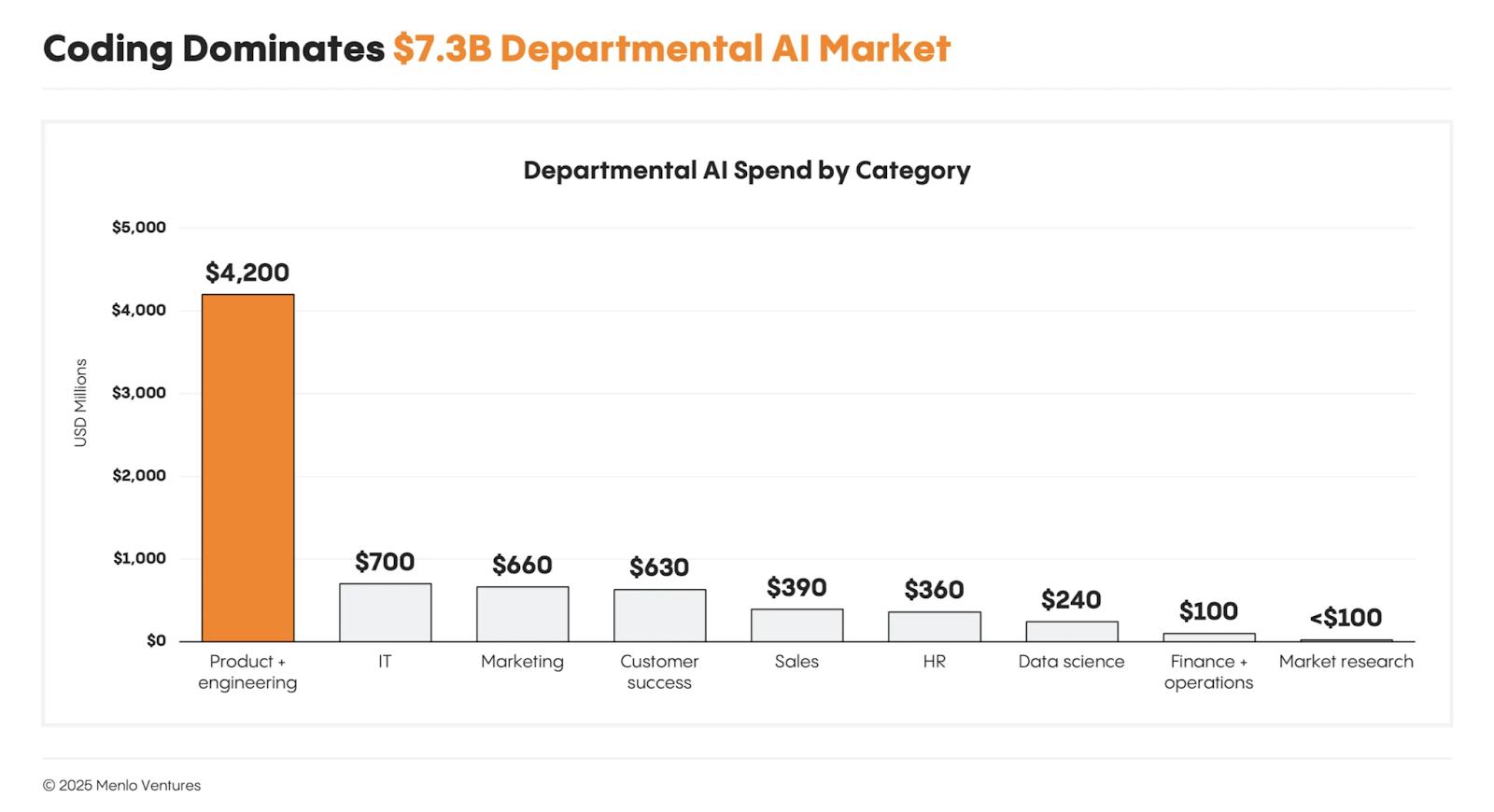

AI's Big Winner in 2025: Coding Agents

2025 was marked by major leaps in AI for software development. Large language models became far better at reasoning through complex problems, and "AI agents" were hyped as the next big thing. Many dubbed 2025 the "Year of the AI Agent", anticipating autonomous assistants that could handle everything from web research to booking travel. However, reality fell short of the buzz — most general-purpose agents remained experimental, and much of the talk didn't translate into real production use.

The one clear success story was in the realm of coding agents. AI coding assistants like GitHub Copilot, Cursor, OpenAI's code models, and others truly delivered value to developers at scale. By early 2025, over 15 million developers were using GitHub Copilot (a 400% increase in one year), and on average, it was generating nearly half of all code written by those users. In some cases (e.g. Java projects), AI was writing 60%+ of the code for developers. This widespread adoption of coding agents massively accelerated development speed and productivity.

Why did coding agents win in 2025? First, their utility is tangible — they help write and complete code, a daily developer task, with impressive accuracy. Second, they matured from mere autocompletion into intelligent pair programmers. Developers found they could build features faster, automate boilerplate code, and even get help with bug fixes and code reviews using these AI assistants. GitHub reported that teams using Copilot were completing tasks significantly faster, and pull request cycle times dropped sharply (from ~9.6 days to 2.4 days on average in one study) when AI assistance was in the mix.

At the same time, this "uncorking" of code velocity introduced new challenges. With AI generating code at lightning speed, verification and quality assurance became a pressing concern. Developers joked that code review sometimes took longer than writing the code itself — and in many cases, this was true. Amazon CTO Werner Vogels coined the term "Verification Debt" to describe this crisis: AI can produce code faster than humans can properly understand, review, and verify. In his AWS re:Invent 2025 keynote, Vogels warned that verification debt would be the #1 challenge of AI-assisted coding in 2026. Early data backs this up — according to the latest DORA report from Google, heavy AI adoption was correlated with a 7.2% decline in software stability.

Prediction 1: Coding Agents Get Even More Powerful and Responsible in 2026

In 2026, we can expect AI coding agents to become even more mature, capable, and deeply integrated into development workflows. The foundational models behind these agents continue to improve in reasoning and context-handling, meaning the AI will do more of the heavy lifting in coding tasks. We're likely to see coding agents that can handle larger projects and multi-step tasks autonomously.

New dev assistants are emerging that can implement a feature from a natural language spec, generate tests, update config files, and even orchestrate changes across multiple repositories. Early signs of this were visible in 2025 — an AI tool called Kiro demonstrated multi-step coding capabilities (following specs, generating tests, and learning from code reviews) and dynamic tool integration. In 2026, such intelligent automation will become more common. We might finally get true AI pair programmers that don't just suggest code, but can open a pull request, run the CI/CD pipeline, and remediate simple errors on their own.

However, with great power comes great responsibility — and a greater need for trust. Developers in 2026 won't just be asking "Can the AI write this code?" but also "Should I trust this code?" and "How do I ensure it won't break anything?"

The next generation of coding agents will likely put more emphasis on built-in verification, testing, and safety checks. GitHub is integrating AI into code reviews and vulnerability scanning, and open-source agent frameworks are exploring ways for AI to debug or verify its own output before handing it off. Expect 2026 to bring "guardrails" around AI coding: features that simulate the code's execution or check its consistency with specifications, so that the agent's output is more reliable out of the box.

Prediction 2: World Models and Autonomous Reliability — The Next Frontier

If 2025 was about speeding up code creation, 2026 will be about keeping that code under control. The key to this will be the rise of world models in software engineering. What is a "world model" in this context? It's essentially a live, digital replica of your software systems — a continuously updating model of all your code, configurations, infrastructure, and their interdependencies. Think of it as a digital twin of your entire application environment.

By 2026, we anticipate that world models will become invaluable for companies dealing with complex, AI-fueled systems. Startups like Cielara are pioneering this approach: Cielara builds a live map of an organization's cloud and codebase, mapping every service, workload, and dependency in real time.

This rich map is not just for visualization — it's used as a simulation engine. With a world model in place, you can simulate the impact of any code or config change and see what would happen before it actually happens in reality. You can road-test your code in a virtual copy of your system, catching failures before they occur in production.

We predict that in 2026, this concept of "pre-deployment simulation" will become a best practice for any AI-heavy software team. When an AI coding agent (or a human developer) proposes a change, you'll run it through your world model first. The world model, powered by causal AI reasoning, will evaluate that change in context — it will understand who made the change, what systems it touches, and how it might ripple through the architecture. It's like having a virtual sandbox with full fidelity: if a configuration tweak would knock out your payment service, the model will raise a red flag before you merge that PR.

These simulations aren't just simple unit tests or lint checks; they consider the complex graph of dependencies in modern cloud-native apps. By analyzing the graph of cause-and-effect in software systems, a world model can predict even second- and third-order consequences of a change. Teams will be able to ask: "If we upgrade Library X or change API Y, what other services could break?" and get deterministic answers.
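To make the "second- and third-order consequences" idea concrete, here is a minimal sketch of one ingredient such a system might use: a breadth-first walk over a service dependency graph that reports how many hops away each affected service sits from the change. The service names, the `DEPENDS_ON` map, and the `impacted_services` function are all hypothetical illustrations, not a description of any particular product's implementation. A real world model would also need runtime behavior, configuration, and traffic data that a static graph does not capture.

```python
from collections import deque

# Hypothetical service graph: each key depends on the services in its list.
DEPENDS_ON = {
    "checkout": ["payments", "inventory"],
    "payments": ["auth"],
    "inventory": ["pricing"],
    "storefront": ["checkout", "pricing"],
    "auth": [],
    "pricing": [],
}


def impacted_services(depends_on, changed):
    """Return {service: hops} for every service that transitively depends on `changed`.

    hops == 1 is a direct (first-order) dependent, 2 is second-order, and so on.
    """
    # Invert the edges so we can walk from a service to the services that use it.
    dependents = {name: [] for name in depends_on}
    for service, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(service)

    hops = {}
    queue = deque([(changed, 0)])
    while queue:
        current, distance = queue.popleft()
        for parent in dependents.get(current, []):
            # Record each service once, at its shortest distance from the change.
            if parent != changed and parent not in hops:
                hops[parent] = distance + 1
                queue.append((parent, distance + 1))
    return hops


if __name__ == "__main__":
    for service, order in sorted(impacted_services(DEPENDS_ON, "auth").items(),
                                 key=lambda item: item[1]):
        print(f"order {order}: {service}")
```

In this toy graph, a change to `auth` reaches `payments` directly, `checkout` at second order, and `storefront` at third order. The graph walk only answers "what could be affected"; deciding whether something would actually break requires the simulation layer described above.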

World models shift us from reactive ops to proactive ops. Instead of waiting for a crash and then scrambling, companies will use world models as a kind of "autopilot" for reliability, preventing crashes in the first place. This marks the birth of a new category some are calling Autonomous Reliability.

Causal Dynamics: Building the AI-Native Future of Engineering

All of these trends point toward an exciting vision for developers and startups in 2026. Software engineering is adapting to an AI-native reality: AI writes much of our code, so we need AI-assisted ways to manage and maintain that code. This is exactly the space we at Cielara AI are tackling with our focus on causal dynamics. By leveraging causal inference and dynamic modeling, our platform helps developers regain control over the rapid pace of AI-generated changes.

Imagine going into 2026 as a developer: You still use your favorite coding agent to build features at 10x speed. But now you also have a safety net. Every time you or the AI makes a change, your world model instantly runs a check. It might say: "Hey, this API call will likely overload service Z and could crash production — how about we fix that now?"

Your tools could even automatically generate a fix or an optimized deployment plan. This is not science fiction; it's the natural evolution of the SDLC in the AI era. The endgame is autonomous reliability: systems that largely manage their own stability, with AI predicting and preventing outages in real time.

Real-World Use Cases

A Retail Company Avoids a Black Friday Outage

A large retailer prepares a pricing update days before Black Friday. Before the change is deployed, the system automatically simulates it against a live digital model of the retailer's infrastructure and detects that the update would overload a downstream inventory service under peak traffic. The issue is flagged days in advance, allowing the team to fix it calmly — resulting in no outage, no revenue loss, and no emergency war room during the most critical shopping window of the year.
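The arithmetic behind a check like this can be sketched in a few lines. The example below assumes the simulation already knows the expected peak request rate, how many calls each incoming request makes to a downstream service, and that service's sustainable capacity. Every name and number here (`Downstream`, `overloaded`, the 80% headroom, the traffic figures) is a made-up illustration rather than data from the scenario.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Downstream:
    name: str
    capacity_rps: float       # assumed sustainable requests per second
    calls_per_request: float  # downstream calls made per incoming request


def overloaded(peak_rps, downstreams, headroom=0.8):
    """Return (name, projected_rps) for services projected above headroom * capacity."""
    flagged = []
    for service in downstreams:
        projected = peak_rps * service.calls_per_request
        if projected > headroom * service.capacity_rps:
            flagged.append((service.name, projected))
    return flagged


if __name__ == "__main__":
    peak_rps = 4000  # hypothetical peak traffic

    # Hypothetical: the pricing update triples the inventory lookups per request.
    before = [Downstream("inventory", capacity_rps=10000, calls_per_request=1.0)]
    after = [Downstream("inventory", capacity_rps=10000, calls_per_request=3.0)]

    print("before:", overloaded(peak_rps, before))
    print("after: ", overloaded(peak_rps, after))
```

With these assumed figures, the current configuration stays under the threshold (4,000 projected against an 8,000 limit), while the updated one projects 12,000 requests per second against the same limit and gets flagged. The headroom parameter is a design choice: flagging at 80% of capacity rather than 100% leaves a margin for traffic estimates that turn out too low.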

Faster Product Launches Without Slowing Teams Down

A SaaS company wants to ship features weekly instead of monthly. With a world model in place, every release is "rehearsed" before going live, allowing the system to predict whether a change will degrade performance, violate security policies, or break dependencies across teams. As a result, leadership gains the confidence to approve faster launches, and engineering teams move forward without endless debates over whether a release is "safe enough."

Leadership Finally Gets Predictable Reliability

For executives, reliability has long felt reactive and unpredictable. World models change this dynamic by allowing leaders to see reliability risks before launches, quantify potential business impact, and make informed trade-offs between speed and safety. As reliability becomes measurable, predictable, and governable, surprises in board meetings and earnings calls become far less common.

Institutional Knowledge Stops Living in People's Heads

When senior engineers leave, critical knowledge often leaves with them. A causal world model continuously learns how systems behave, how teams deploy, and what typically causes failures — gradually becoming a living institutional memory of how the company's software actually works.

What to Expect in 2026

2026 is poised to be a year where developers gain superpowers — and new safety systems to harness those powers. AI coding agents will be writing more code than ever and doing so more intelligently, so embrace them as your teammates. At the same time, be prepared for a cultural shift towards preventative engineering. The best engineering teams will treat reliability not as an afterthought, but as something to design for from the start using simulations and causal models.

Looking ahead, it's clear that the next phase of the AI software stack won't be defined by how fast we can generate code, but by how safely we can operate it at machine scale. This is where causal, autonomous reliability becomes foundational. The mantra is no longer "move fast and break things" — it's "move fast, understand the consequences, and break nothing." That is the future of AI-native software — and it's the future we're building toward.

Sources

- AI2 Incubator (2025). State of AI Agents in 2025: Hype vs Reality

- GitHub Copilot Usage Statistics (2025). Report on Copilot Adoption

- Vogels, W. (2025). AWS re:Invent Keynote — The Renaissance Developer

- Dorcey, M. (2025). How Generative AI Is Changing Software Development

- Google Cloud & DORA (2025). State of AI-Assisted Software Development Report

- AWS re:Invent (2025). Day 3 — Infrastructure Innovations & Werner's Renaissance Developer